Azure Load Balancer distributes incoming requests across multiple instances of your application. The application can be deployed to virtual machines or virtual machine scale sets (VMSS). Azure Load Balancer is a network load balancer that operates at layer 4 (the transport layer) of the OSI model. Because requests are spread across several instances, the application can remain available even if an individual instance fails.

Azure Load Balancer supports both inbound and outbound traffic scenarios. It relies on load balancing rules and health probes to distribute traffic to the backend servers. Load balancing rules determine how traffic is distributed across the backend servers. Health probes check that each backend server is healthy and capable of handling requests. If a backend server fails its health probe, the load balancer stops sending new requests to that unhealthy server.
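To make the interplay between health probes and traffic distribution concrete, here is a minimal Python sketch. It assumes distribution by hashing the connection's five-tuple (source IP, source port, destination IP, destination port, protocol), which is the documented default distribution mode of Azure Load Balancer. The `Backend` class, the `pick_backend` function, and the use of SHA-256 are hypothetical names and choices made for illustration; this is not Azure's actual implementation.

```python
import hashlib
from dataclasses import dataclass


@dataclass
class Backend:
    """A backend server; `healthy` stands in for the result of a health probe."""
    name: str
    healthy: bool = True


def pick_backend(backends, src_ip, src_port, dst_ip, dst_port, protocol):
    """Pick a healthy backend by hashing the connection's five-tuple."""
    # Servers that failed their probe are excluded before any selection happens.
    healthy = [b for b in backends if b.healthy]
    if not healthy:
        raise RuntimeError("no healthy backends available")

    # Illustrative hash only: the same five-tuple always maps to the same
    # backend for as long as the set of healthy backends does not change.
    key = f"{src_ip}:{src_port}:{dst_ip}:{dst_port}:{protocol}".encode()
    digest = hashlib.sha256(key).digest()
    index = int.from_bytes(digest[:8], "big") % len(healthy)
    return healthy[index]


if __name__ == "__main__":
    pool = [Backend("vm1"), Backend("vm2"), Backend("vm3")]
    pool[1].healthy = False  # simulate a failed health probe on vm2
    chosen = pick_backend(pool, "203.0.113.7", 50000, "198.51.100.10", 80, "tcp")
    print(chosen.name)  # never "vm2" while its probe is failing
```

In this sketch, marking a backend as unhealthy removes it from selection immediately, which mirrors the behavior described above: traffic keeps flowing, but only to servers that pass their probe.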

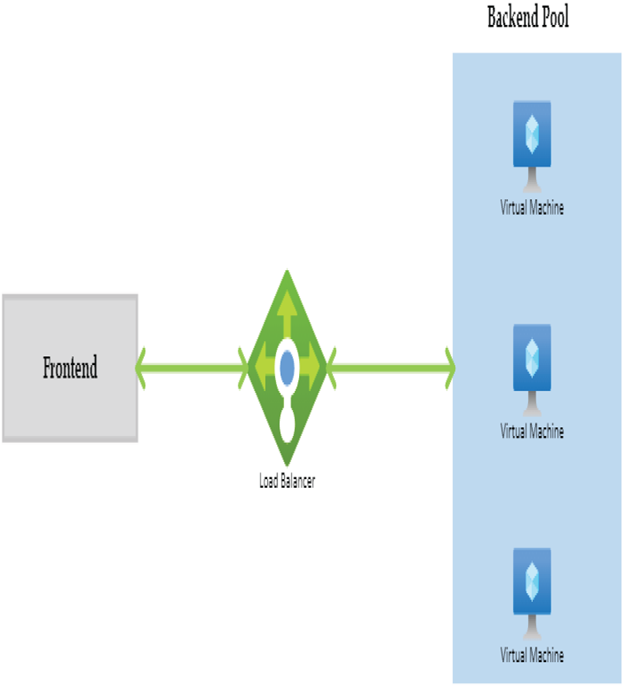

Figure 5.4 shows a high-level overview of how Azure Load Balancer works.

FIGURE 5.4 Overview of Azure Load Balancer

There are two types of load balancers: public and internal. Let’s take a look at these types and understand the differences between them.

Types of Load Balancers

As you already know, the purpose of a load balancer is to distribute traffic to the backend servers after verifying their health. Depending on where the load balancer is placed in the architecture, it is categorized as either a public load balancer or an internal load balancer.

Public Load Balancer

As the name suggests, a public load balancer has a public IP address and is Internet facing. In a public load balancer, the public IP address and a port number are mapped to the private IP address and port number of the VMs that are part of the backend pool. By using load balancing rules, you can configure the port numbers and handle different types of traffic. For example, if you want to distribute incoming web requests across a set of web servers, you can deploy a public load balancer in front of them. In short, a public load balancer exposes an Internet endpoint.

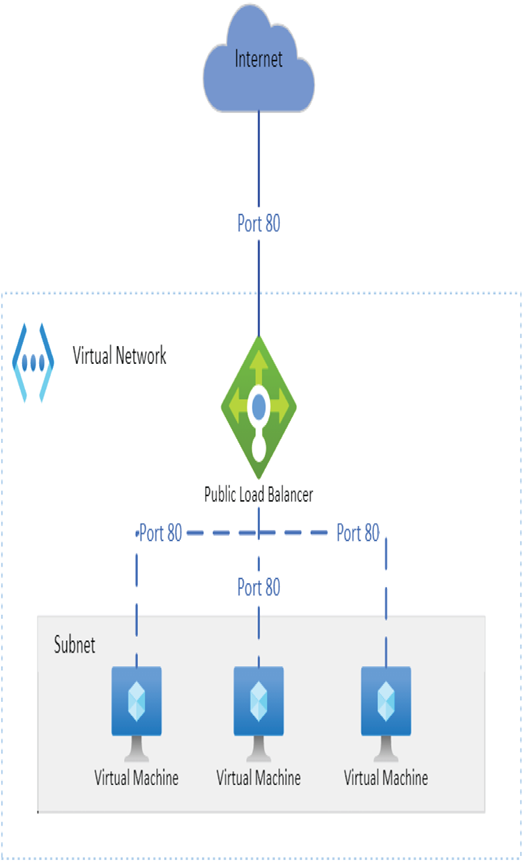

Figure 5.5 shows how incoming web requests from the Internet are distributed across the set of web servers in the backend pool.

FIGURE 5.5 Public load balancer

In Figure 5.5, you can see that all incoming requests to the front-end public IP address of the load balancer on port 80 are distributed to the backend web servers on port 80.
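The mapping from a front-end public IP address and port to the private addresses and port of the backend pool can be sketched as a small data structure. The following Python example is a simplified model, not the Azure API: the `LoadBalancingRule` class, the `resolve_targets` function, and all IP addresses are hypothetical values chosen for illustration.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass(frozen=True)
class LoadBalancingRule:
    """Maps a front-end IP and port to a port on every VM in a backend pool."""
    protocol: str
    frontend_ip: str               # public IP address of the load balancer
    frontend_port: int
    backend_port: int
    backend_pool: Tuple[str, ...]  # private IP addresses of the backend VMs


def resolve_targets(
    rules: List[LoadBalancingRule], protocol: str, dst_ip: str, dst_port: int
) -> List[Tuple[str, int]]:
    """Return the (private IP, port) pairs eligible to receive this traffic."""
    for rule in rules:
        if (rule.protocol, rule.frontend_ip, rule.frontend_port) == (
            protocol,
            dst_ip,
            dst_port,
        ):
            return [(ip, rule.backend_port) for ip in rule.backend_pool]
    # No rule matches this front-end endpoint, so nothing is forwarded.
    return []


# Hypothetical rule: web traffic on port 80 goes to port 80 on two web servers.
web_rule = LoadBalancingRule(
    protocol="tcp",
    frontend_ip="203.0.113.10",
    frontend_port=80,
    backend_port=80,
    backend_pool=("10.0.0.4", "10.0.0.5"),
)

if __name__ == "__main__":
    print(resolve_targets([web_rule], "tcp", "203.0.113.10", 80))
    # prints [('10.0.0.4', 80), ('10.0.0.5', 80)]
```

Traffic that arrives on a port with no matching rule returns an empty list in this model, which reflects the idea that the rules decide which types of traffic the load balancer handles.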
